Physical AI

NVIDIA Cosmos

An open platform for physical AI with world foundation models (WFMs), video data processing libraries, video evaluation, and post-training frameworks.

World Foundation Models

Open Models for World Generation and Understanding

Cosmos Predict

Leading world generation model, adaptable to any physical AI task or environment.

Generate 30-second predictive video worlds from text, image, or video inputs with 2B or 14B models, or post-train on your own data to create custom edge cases, closed-loop policies, and multiview, robot-centric simulations.

Cosmos Transfer

Multicontrol model for simulation-to-photoreal transformation.

Pair with physical AI simulation frameworks, such as CARLA or NVIDIA Isaac Sim™, to accelerate synthetic data generation across various environments and lighting conditions.
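A sweep like this is usually organized as a grid: each rendered simulator clip is paired with every target environment and lighting condition. The sketch below assumes one text prompt per combination. The `TransferJob` type, its fields, the prompt template, and the clip names are hypothetical illustrations, not part of any Cosmos API. Submitting the jobs to Cosmos Transfer is deliberately left out.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class TransferJob:
    """Hypothetical description of one sim-to-photoreal conversion request."""
    sim_clip: str     # path to a rendered simulator clip (e.g. from CARLA or Isaac Sim)
    environment: str  # target scene variation
    lighting: str     # target lighting condition
    prompt: str       # text prompt describing the desired photoreal output


def build_jobs(sim_clips, environments, lightings):
    """Expand every simulator clip into one job per (environment, lighting) pair."""
    jobs = []
    for clip, env, light in product(sim_clips, environments, lightings):
        # Hypothetical prompt template; real prompts would be far more descriptive
        prompt = f"Photorealistic {env} scene under {light} lighting"
        jobs.append(TransferJob(clip, env, light, prompt))
    return jobs


if __name__ == "__main__":
    jobs = build_jobs(
        sim_clips=["warehouse_run_01.mp4", "warehouse_run_02.mp4"],
        environments=["warehouse", "loading dock"],
        lightings=["daylight", "dusk", "night"],
    )
    print(len(jobs))  # 2 clips x 2 environments x 3 lightings = 12 jobs
    print(jobs[0].prompt)
```

Because the grid grows multiplicatively, adding one more lighting condition here adds four jobs (one per clip and environment pair).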

Cosmos Reason

Leading vision-language model (VLM) that enables robots and vision AI agents to reason like humans.

Combines prior knowledge, physics, and common sense for real-time alerts and actionable insights across public safety, traffic monitoring, logistics, quality inspection, and physical AI.

Data Processing and Evaluation

Accelerate dataset processing and evaluation.

Use Cases

How Cosmos Accelerates AI Across Industries

-

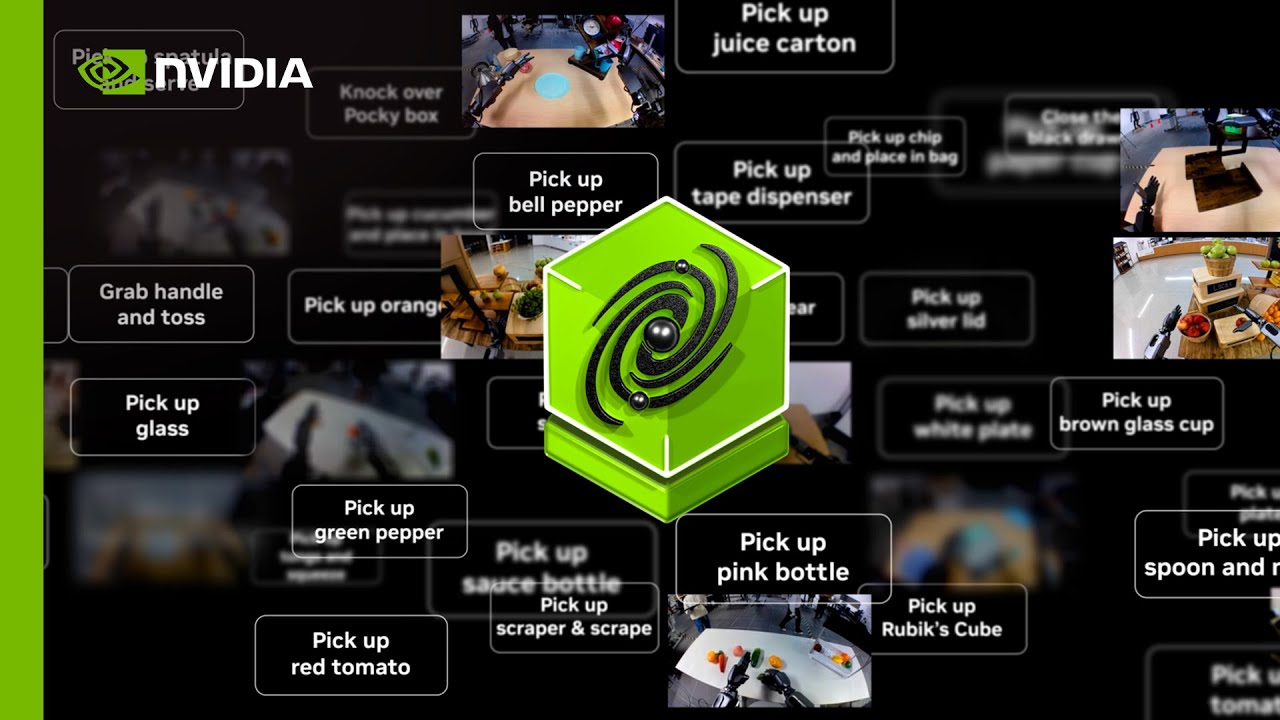

Robot Learning

-

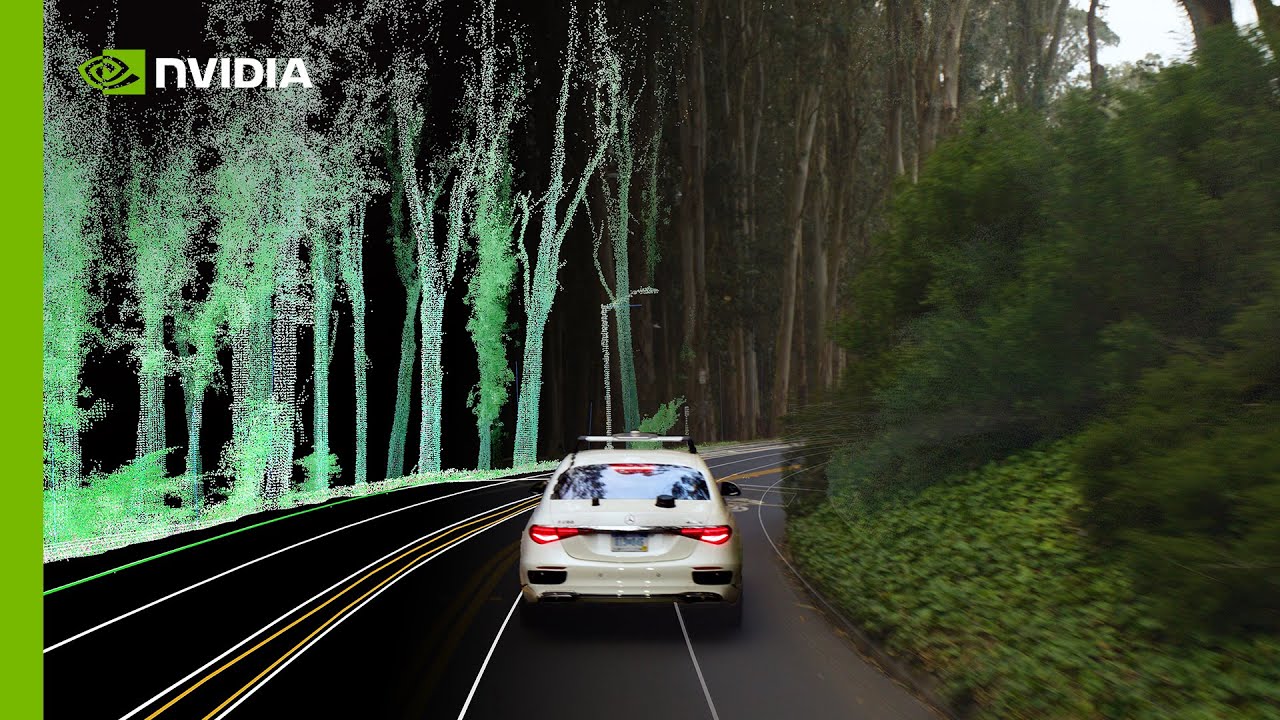

Autonomous Vehicle Training

-

Video Analytics AI Agents

Robot Learning

Build custom world models for downstream tasks, environments, camera or sensor layouts, and policies.

- Post-train Cosmos Predict for robot-specific views or control policies

- Generate synthetic data across environments and lighting conditions with Cosmos Transfer

- Post-train Cosmos Reason using the Cosmos RL framework to build vision-language-action (VLA) models

- Create an end-to-end synthetic data augmentation and evaluation pipeline using the Physical AI Data Factory Blueprint built on Cosmos

Autonomous Vehicle Training

Generate custom, diverse, and high-fidelity sensor data for safely training, testing, and validating autonomous vehicles.

- Increase the diversity of existing data with new weather, lighting, and geolocation variations using Cosmos Transfer

- Expand existing footage into multi-sensor views using Cosmos Predict

- Create an end-to-end synthetic data augmentation and evaluation pipeline using the Physical AI Data Factory Blueprint built on Cosmos

Video Analytics AI Agents

Enhance automation, safety, and operational efficiency across industrial and urban environments.

With Cosmos Reason, AI agents can analyze, summarize, and interact with real-time or recorded video streams to:

- Deliver real-time question-answering and alerts

- Provide rich contextual insights

- Extract insights from large-scale video data with the NVIDIA Blueprint for video search and summarization
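For long recordings, one common pattern is to split the stream into overlapping time windows, query the VLM about each window, and then merge the per-window answers. The sketch below shows only the windowing step. The window and overlap lengths are hypothetical, and this is a generic technique. It is not a description of how the NVIDIA Blueprint for video search and summarization works internally.

```python
def sliding_windows(duration_s: float, window_s: float, overlap_s: float):
    """Return (start, end) pairs in seconds that cover [0, duration_s].

    Consecutive windows share `overlap_s` seconds, so any event no longer
    than the overlap falls entirely inside at least one window.
    """
    if window_s <= 0:
        raise ValueError("window_s must be positive")
    if not 0 <= overlap_s < window_s:
        raise ValueError("overlap_s must be in [0, window_s)")
    step = window_s - overlap_s
    windows = []
    start = 0.0
    while start < duration_s:
        end = min(start + window_s, duration_s)  # clip the last window to the recording
        windows.append((start, end))
        if end >= duration_s:
            break
        start += step
    return windows


if __name__ == "__main__":
    # A 10-minute recording split into 60 s windows with 10 s of overlap
    for start, end in sliding_windows(600, 60, 10)[:3]:
        print(f"{start:.0f}s-{end:.0f}s")  # prints 0s-60s, then 50s-110s, then 100s-160s
```

The overlap trades extra inference calls for not missing events that straddle a window boundary.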

Starting Options

Get Started With NVIDIA Cosmos

AI Infrastructure

Get the Best Performance With NVIDIA Blackwell

NVIDIA RTX PRO 6000 Blackwell Series Servers accelerate physical AI development for robots, autonomous vehicles, and AI agents across training, synthetic data generation, simulation, and inference.

Unlock peak performance for Cosmos world foundation models on NVIDIA Blackwell GB200 for industrial post-training and inference workloads.

Ecosystem

Adopted by Leading Physical AI Innovators

Model developers from the robotics, autonomous vehicles, and vision AI industries are using Cosmos to accelerate physical AI development.

Next Steps

Resources

The Latest From Cosmos Developers

[February 9, 2026] Enhanced compute support, quantization, and CUDA compatibility for the new Cosmos Reason 2.

[January 22, 2026] Released research on Cosmos Policy, which builds on Cosmos Predict-2 for visuomotor control and planning.

[December 19, 2025] Released Hugging Face Diffusers support for Cosmos-Predict2.5-2B, a distilled Cosmos-Predict2.5-2B Text2World checkpoint on Hugging Face, and a distillation guide.

[December 19, 2025] Released Image2Image and ImagePrompt capabilities for Cosmos Transfer 2.5. See the inference guide for details.

Explore GitHub for more.

Frequently Asked Questions

Cosmos WFMs are available to everyone under the NVIDIA Open Model License.

Refer to the new Cosmos Cookbook, which contains step-by-step recipes and post-training scripts to quickly build, customize, and deploy NVIDIA’s Cosmos world foundation models for robotics and autonomous systems.

Yes. You can use Cosmos to build from scratch with your preferred foundation model or model architecture. Start by preprocessing your video data with Cosmos Curator. Then use the Cosmos Tokenizer to compress your video into compact tokens and decode tokens back into video. Once the data is processed, you can train or fine-tune your model.
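To see why tokenizing before training pays off, it helps to estimate the latent size. The sketch assumes a temporally causal video tokenizer that encodes the first frame on its own and compresses the remaining frames by a factor `t` in time and by `s` in each spatial dimension. The factors 8 and 16 and the clip size below are illustrative assumptions, not the specification of a particular Cosmos Tokenizer checkpoint.

```python
def latent_shape(frames: int, height: int, width: int, t: int, s: int):
    """Latent grid (frames, height, width) for a hypothetical causal video tokenizer.

    Assumes the first frame is encoded separately and the remaining
    frames are compressed by factor t, so `frames - 1` must divide by t.
    """
    if (frames - 1) % t or height % s or width % s:
        raise ValueError("input size must be divisible by the compression factors")
    return (1 + (frames - 1) // t, height // s, width // s)


def compression_ratio(frames: int, height: int, width: int, t: int, s: int) -> float:
    """Number of input pixel positions per latent position (channels ignored)."""
    lf, lh, lw = latent_shape(frames, height, width, t, s)
    return (frames * height * width) / (lf * lh * lw)


if __name__ == "__main__":
    shape = latent_shape(121, 704, 1280, t=8, s=16)
    print(shape)  # (16, 44, 80)
    print(round(compression_ratio(121, 704, 1280, t=8, s=16)))  # 1936
```

Under these assumptions, a model trained on the latents sees about 1,936 times fewer positions than it would on raw pixels, which is the main reason to compress before training.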

Using NVIDIA NIM™ microservices, you can easily integrate your physical AI models into your applications across cloud, data centers, and workstations.

You can also use NVIDIA DGX Cloud to train AI models and deploy them anywhere at scale.

All three are WFMs with distinct roles:

- Cosmos Predict generates diverse video scenes from text, image, or video prompts and is well suited to post-training for specific subjects such as robots or self-driving cars.

- Cosmos Transfer applies multicontrol style transfer, such as changing lighting and environments, to physics-based videos that are often created in simulators like NVIDIA Omniverse™.

- Cosmos Reason answers queries by reasoning over video and image inputs. From a single starting video, Cosmos Reason can generate new and diverse text prompts for Cosmos Predict. It can also critique and annotate synthetic data from Predict and Transfer.
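The division of labor between Reason and Predict can be pictured as a generate-and-filter loop. The sketch below uses plain Python callables as stand-ins. The names `propose_prompts`, `generate_video`, and `critique` are hypothetical placeholders for calls to Cosmos Reason and Cosmos Predict, not real Cosmos functions, and the score threshold is an assumption.

```python
from typing import Callable, List, Tuple


def synthesize_dataset(
    seed_video: str,
    propose_prompts: Callable[[str], List[str]],  # stand-in for Cosmos Reason: video -> prompts
    generate_video: Callable[[str], str],         # stand-in for Cosmos Predict: prompt -> clip
    critique: Callable[[str, str], float],        # stand-in for Cosmos Reason: (clip, prompt) -> score
    min_score: float = 0.5,                       # hypothetical acceptance threshold
) -> List[Tuple[str, str, float]]:
    """Expand one seed video into scored synthetic clips, keeping only those that pass critique."""
    kept = []
    for prompt in propose_prompts(seed_video):
        clip = generate_video(prompt)
        score = critique(clip, prompt)
        if score >= min_score:
            kept.append((clip, prompt, score))
    return kept


# Toy stand-ins so the loop can run end to end without any model
def toy_prompts(video: str) -> List[str]:
    return [f"{video}: robot picks up a cup", f"{video}: robot drops a cup"]


def toy_generate(prompt: str) -> str:
    return prompt.replace(" ", "_") + ".mp4"


def toy_critique(clip: str, prompt: str) -> float:
    return 0.9 if "picks" in prompt else 0.2


if __name__ == "__main__":
    for clip, prompt, score in synthesize_dataset("kitchen.mp4", toy_prompts, toy_generate, toy_critique):
        print(score, prompt)  # 0.9 kitchen.mp4: robot picks up a cup
```

Keeping the models behind simple callables makes the orchestration easy to test, and the same loop would accept Cosmos Transfer outputs in place of `generate_video`.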

Omniverse creates realistic 3D simulations of real-world tasks by using different generative APIs, SDKs, and NVIDIA RTX rendering technology.

Developers can feed Omniverse simulations to Cosmos Transfer models as instruction videos to generate controllable, photoreal synthetic data.

Together, Omniverse provides the simulation environment before and after training, while Cosmos provides the foundation models to generate video data and train physical AI models.

Learn more about NVIDIA Omniverse.